Our Work

Founded in 2014, the Center on Privacy & Technology is a leader at the intersection of privacy, surveillance, and civil rights.

Latest Work

Privacy Center at the Georgetown Law Tech Mixer

The Privacy Center tabled at the Georgetown Law Tech Mixer, which is an opportunity for organizations to showcase their work to students and see what others are working on at Georgetown. Two Privacy Center staff members, Emerald Tse and Marianna Poyares, spoke with 25-30 students about the Privacy Center's work and how to get involved. Some mentioned knowing about our work before arriving at Georgetown, and others were eager for the chance to apply for our research assistant positions.

“The Department of Homeland Security Is Unlawfully Collecting DNA” article in Lawfare

Director of Research & Advocacy Stevie Glaberson published a piece in Lawfare, "The Department of Homeland Security Is Unlawfully Collecting DNA," describing the Department of Homeland Security’s expansive and unlawful DNA collection regime. Stevie argued that the agency appears to be violating the statutory bounds of its DNA-collecting authority, that it is violating the Constitution, and that the consent of those from whom DNA is collected cannot cure these violations.

“Raiding the (U.S. Citizen) Genome” update published

The Privacy Center released new findings about who the Department of Homeland Security has been collecting DNA from. Our latest research, Raiding the (U.S. Citizen) Genome, analyzes records CBP released in response to a Privacy Center FOIA request and finds that CBP has knowingly taken DNA from U.S. citizens on a regular basis, with more than 2,000 samples taken from citizens between 2020 and 2024. The findings were covered by multiple news outlets, including WIRED, The New York Times, The Guardian, and Politico’s Morning Tech. This research builds on the Privacy Center’s report Raiding the Genome: How the United States Government Is Abusing Its Immigration Powers to Amass DNA for Future Policing, which explained and analyzed the drastic expansion of DNA collection at the Department of Homeland Security. DHS’s DNA collection program operates with essentially no oversight, and the DNA taken is used for future criminal policing and prosecution.

“The Department of Homeland Security Is Unlawfully Collecting DNA” article published

The Center on Privacy & Technology’s Director of Research & Advocacy Stevie Glaberson published a piece in Lawfare, "The Department of Homeland Security Is Unlawfully Collecting DNA," describing the Department of Homeland Security’s expansive and unlawful DNA collection regime. Stevie argued that the agency appears to be violating the statutory bounds of its DNA-collecting authority, that it is violating the Constitution, and that the consent of those from whom DNA is collected cannot cure these violations.

New analysis reveals Customs and Border Protection took DNA from more than 2,000 U.S. citizens between 2020 and 2024

The Privacy Center released an update to its report Raiding the Genome: How the United States Government Is Abusing Its Immigration Powers to Amass DNA for Future Policing. The update found that the government regularly violates federal law by knowingly taking DNA from U.S. citizens without authority to do so. Read the full press release.

Privacy Center quoted in The Latin Times article about ICE’s bulk collection of data

Associate Emerald Tse was quoted in an article in The Latin Times by Pedro Camacho, titled "Arrest of Mexican Man in Hawaii Shows ICE is Using Remittance Data To Deport Migrants," about ICE's bulk collection of data. "[ICE] are sidestepping that constitutional requirement," said Tse.

Privacy Center featured on The Take podcast to talk about DHS DNA Collection

Director of Research and Advocacy Stevie Glaberson joined The Take, an Al Jazeera English podcast, to talk about "Raiding the Genome" and DHS DNA collection. The Take produced an audio and a video piece. The Take's YouTube channel, where the video piece ran, has 16.5 million subscribers.

“Migrant Data Extractivism” paper published

Postdoctoral Fritz Fellow Marianna Poyares published a paper in the prestigious academic journal International Migration, published by the International Organization for Migration (IOM-UN). The paper, "Migrant Data Extractivism: Tech and Borders at the Limit of Rights," argues that migrant data extractivism is fundamental for understanding new technologies of migration governance, highlighting, among other practices, the migrant DNA collection uncovered by the Privacy Center's Raiding the Genome report.

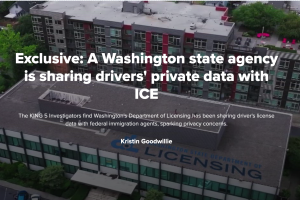

Privacy Center provided background for Seattle’s King 5 investigation

Executive Director Emily Tucker and Associate Emerald Tse spoke on background to a reporter from Seattle's King 5 for an investigation that revealed that Washington State's Department of Licensing reinstated ICE access to private driver data. This reporting follows the re-release of the Privacy Center's "American Dragnet" report, which details Washington State's previous attempts to block the use of state data for carrying out deportations.

“Raiding the Genome” update published

The Privacy Center released updated findings for our 2024 report about how the Department of Homeland Security has become the primary contributor of DNA samples to a national criminal database. One year after publishing “Raiding the Genome,” we shared new information about the continued growth of the DNA database, the pace at which it is growing, and whose DNA is being taken for that database.